
13 May 21

Update: Companion Pi Touchscreen

I wrote my original post on the Companion Pi Touchscreen shortly after getting it to work well enough to use in production, and I was pleased with the results.

I followed that up with the addition of a script to monitor the temperature of the Pi.

I’ve since made a number of additions to the way I run the Companion Pi software on this device, and added some peripherals, so an update seemed worthwhile.

Streamdeck XL

After saving up, I now own a Streamdeck XL. I found that I still craved physical buttons that I could find without having to look at the device. It is plugged into the USB port of the Companion Pi through a 10 meter active USB cable, which boosts the signal over the long run. I don’t really need 10 meters, but I did want some flexibility to site the Streamdeck on the trolley that carries our main presenter computer.

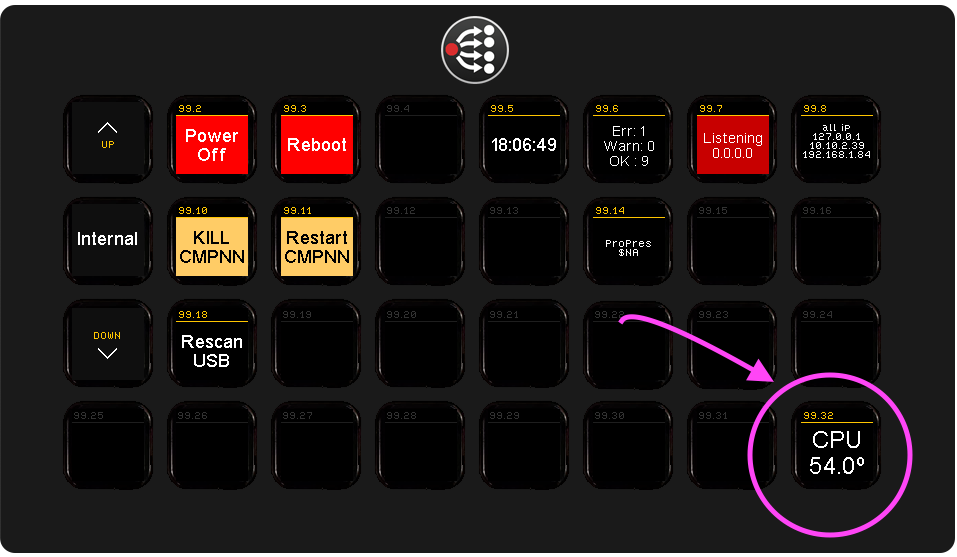

The Streamdeck is powered by the Pi and is configured in Companion as a separate instance, so the touchscreen and Streamdeck can show different button pages. I now mostly have the Pi touchscreen showing a diagnostic page of buttons that lets me see at a glance that everything is connected and running OK.

DMX host

The Pi also acts as a DMX host, running Open Lighting Architecture (OLA), which allows me to control an Enttec DMX Pro MkII via Art-Net.

This allows me to use Companion Pi, as well as other software, to control some DMX-enabled lighting over the network.
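To illustrate what "via Art-Net" means on the wire, here is a minimal Python sketch that builds and sends one ArtDmx packet over UDP port 6454, following the packet layout in the Art-Net specification. It is not how Companion or OLA are implemented. It assumes OLA's Art-Net plugin is enabled on the Pi and patched so that the incoming Art-Net universe drives the Enttec output; the function names are illustrative.

```python
import socket

ARTNET_PORT = 6454     # standard Art-Net UDP port
OP_DMX = 0x5000        # ArtDmx opcode, sent little-endian
PROTOCOL_VERSION = 14  # Art-Net protocol version, sent big-endian


def build_artdmx(universe: int, data: bytes, sequence: int = 0) -> bytes:
    """Build an ArtDmx packet carrying up to 512 DMX channel values."""
    if not 0 <= universe <= 0x7FFF:
        raise ValueError("Art-Net port addresses are 15 bits")
    if len(data) > 512:
        raise ValueError("a DMX universe has at most 512 channels")
    # The data length must be even and at least 2, so pad with zero channels.
    if len(data) % 2:
        data += b"\x00"
    data = data.ljust(2, b"\x00")
    return (
        b"Art-Net\x00"
        + OP_DMX.to_bytes(2, "little")
        + PROTOCOL_VERSION.to_bytes(2, "big")
        + bytes([sequence & 0xFF, 0])     # sequence (0 disables it), physical port
        + universe.to_bytes(2, "little")  # SubUni (low byte), then Net
        + len(data).to_bytes(2, "big")    # data length, big-endian
        + data
    )


def send_dmx(host: str, universe: int, data: bytes, sequence: int = 0) -> None:
    """Send one ArtDmx packet to an Art-Net node such as an OLA host."""
    packet = build_artdmx(universe, data, sequence)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, ARTNET_PORT))
```

For example, `send_dmx("192.168.1.50", 0, bytes([255, 128, 0]))` (a hypothetical Pi address and universe) would set channels 1 to 3 to full, roughly half, and zero.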

Second screen

The Pi also has a second screen attached and, with the aid of some custom scripts, can play YouTube clips at the touch of a button. I use this for animated backgrounds on Zoom calls, where the ATEM handles the green screen.

I do have one problem with the second screen: if it is attached when the Pi starts, the boot halts until the screen is detached. So for now I only attach it after the Pi has booted, which is not ideal. Hopefully I will find an answer at some point.

But why is the Pi doing all these things?

The Pi is now part of a ‘flypack’, which acts as a portable hub for my increasingly complex puppet shows! I want to be able to control everything from a single point, which is often behind a puppet theatre curtain, and with the aid of Companion, QLab and DMX we can put on quite a nice show. Or we will be able to, once we can get out of the house again after lockdown.

I’ll write more about the flypack and its design in a future post.

3 Sep 20

RPi Temperature Monitor

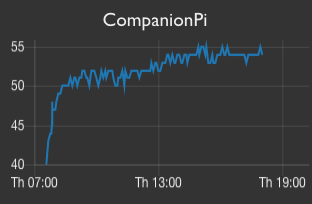

I wanted a way to track temperature changes on my Raspberry Pis, especially the one acting as a touchscreen for my Bitfocus Companion host. It is in a small case, and I had recently installed a heatsink and fan to aid heat dissipation, so I wanted to see how much of a difference they made over time. (Spoiler: the cooling keeps the CPU core about 20 °C cooler than it would be without it!)

I already had Node Red on my network monitoring all sorts of things, including temperature sensors, and it does this in tandem with an MQTT broker. So all I needed to monitor my Raspberry Pis’ temperatures, and display them anywhere I wanted, was to send each temperature value to MQTT and have various clients subscribe to it.

I adapted a small Python script from thingsmatic, which I am using to monitor the CPU temperature on two of my suite of RPis. I say adapted, but all I had to do was use the full path for every executable the script calls and make sure the Python module for sending the MQTT message was installed.
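A minimal sketch of that kind of script is below. It is not the thingsmatic script itself: it assumes the paho-mqtt package as the MQTT module, and the broker address, topic name and `vcgencmd` path are hypothetical placeholders (check the path on your own Pi with `which vcgencmd`). The full path matters because cron jobs run with a much shorter PATH than an interactive shell.

```python
import re
import subprocess

# Hypothetical settings: change these to match your own Pi, broker and topic.
VCGENCMD = "/usr/bin/vcgencmd"  # full path, since cron runs with a minimal PATH
BROKER_HOST = "192.168.1.10"
TOPIC = "home/rpi/companion/cpu_temp"


def parse_temp(output: str) -> float:
    """Extract degrees Celsius from vcgencmd output such as "temp=48.3'C"."""
    match = re.search(r"temp=([\d.]+)'C", output)
    if match is None:
        raise ValueError(f"unexpected vcgencmd output: {output!r}")
    return float(match.group(1))


def read_cpu_temp() -> float:
    """Run `vcgencmd measure_temp` and return the temperature in Celsius."""
    result = subprocess.run(
        [VCGENCMD, "measure_temp"], capture_output=True, text=True, check=True
    )
    return parse_temp(result.stdout)


def publish_temp(temp_c: float) -> None:
    """Publish one reading to the broker as a plain-text number."""
    # Imported here so parse_temp() can be reused without paho-mqtt installed.
    import paho.mqtt.publish as publish

    publish.single(TOPIC, payload=f"{temp_c:.1f}", hostname=BROKER_HOST)


def main() -> None:
    publish_temp(read_cpu_temp())
```

Saved as, say, `cputemp.py` with a call to `main()` at the bottom, it could be scheduled every five minutes with a crontab entry such as `*/5 * * * * /usr/bin/python3 /home/pi/cputemp.py` (both paths hypothetical).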

Architecturally it looks like this:

- The Raspberry Pi runs the script from cron every 5 minutes.

- The script calls `vcgencmd` to get the temperature and publishes it to an MQTT broker on the network.

- Node Red subscribes to the topic and adds updates to a line chart in the dashboard as they arrive.

- Bitfocus Companion has an MQTT plugin, which means I can subscribe to the topic containing the CPU temperature and update a variable whenever that value is seen on the network. I also added an action to the button that runs the temperature check script on the Pi on demand.
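In this setup Node Red and the Companion MQTT plugin do the subscribing. Purely to illustrate the subscriber side, here is a small Python stand-in using paho-mqtt's `subscribe.callback` helper: it keeps the last 24 hours of 5-minute readings and formats a short status line, roughly what a button or chart would show. The topic, broker address and 70 °C warning threshold are hypothetical and must match whatever the publisher uses.

```python
from collections import deque

TOPIC = "home/rpi/companion/cpu_temp"  # hypothetical, must match the publisher
BROKER_HOST = "192.168.1.10"           # hypothetical broker address
WARN_AT_C = 70.0                       # hypothetical warning threshold

# 24 hours of readings at one every 5 minutes (24 * 60 / 5 = 288).
history = deque(maxlen=288)


def handle_reading(payload: bytes) -> str:
    """Record one reading and return a short status string for display."""
    temp_c = float(payload.decode("utf-8"))
    history.append(temp_c)
    status = "HOT" if temp_c >= WARN_AT_C else "OK"
    return f"{temp_c:.1f} C {status} (max {max(history):.1f} C)"


def on_message(client, userdata, message) -> None:
    """paho-mqtt callback: called once per message received on TOPIC."""
    print(handle_reading(message.payload))


def main() -> None:
    # Imported here so handle_reading() can be reused without paho-mqtt installed.
    import paho.mqtt.subscribe as subscribe

    # Blocks and calls on_message for every message until interrupted.
    subscribe.callback(on_message, TOPIC, hostname=BROKER_HOST)
```

Keeping a bounded `deque` means the history never grows past a day's worth of readings, which is roughly the window a dashboard chart would display.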

The main disadvantage is that this solution will not work when I leave my home network. But it’s rare that I do that anyway these days!!